Kolat’s creative process often involves the translation of documents and data into musical parameters. For example, Halki/Heybeliada is based on a piano piece by Turkish-Armenian composer Edgar Manas (1875–1964). To make this work, Kolat “translated” a recording of a piano piece by Manas into an alphabetic stream of data from which he then removed all instances of the letters s, o, y, k, i, r, and m. These are the letters in soykırım, the Turkish word for “genocide.” Next, Kolat transferred the edited data stream back into a sound file, and that sound file, riddled with gaps and garbled moments, then served as source for a composition.

As concerned as Kolat is with the suppression and distortions of cultural memory and history, he is first and foremost a composer and researcher exploring conceptual and perceptual experiences of sound through experimentation with a range of digital tools, whether that is by designing and programming algorithms, translating data into musical parameters, or re-inventing notation that challenges performance practices.

My conversation with Kolat began in May 2017 at the Millay Colony for the Arts, in Austerlitz, New York, where we were both residents, and the conversation continued over Skype in August. As someone outside the field of experimental music, my questions came out of my work as a poet, with an interest in creative forms of translation, and as an art critic, with a curiosity about his scores, some of which appeared (to me) as enigmatic images.

For Messenger of Sorrows, you work with two sources: a Kurdish folk song and the 2015 recording of a rocket hitting a Kurdish house. Can you talk about your process of working with these materials?

In this piece, there are two musical processes going on in tandem. One process is inspired by Alvin Lucier’s piece I Am Sitting in a Room (1969), a work that graphically materializes the relation between sound and space. Lucier sat down and read a text, recorded it, played that recording and recorded it, repeating the process again and again until only a few frequencies were heard, which are the resonant frequencies of Lucier’s room.

What I did was to reverse Lucier’s process. However, rather than playing and recording sounds in a room, I repetitively used a convolutional process to achieve a similar transformation. Convolution allows one to combine a recording documenting the way sound behaves in a space with another sound. When you do that, you are able to simulate a space. (For example, there are commercial software programs which can be used to make a piece sound as if it was recorded in Notre Dame Cathedral.)

Normally, what you do to capture the characteristics of a resonant space is to play a sound, such as a balloon pop or a sweep of a sine tone, in that space, and then record the reflections of the sound. This provides information on the space’s response to the impulse, fittingly called an impulse response. What I was trying to do, though, was to create a narrative of an impossible space, as if I were able to capture the impulse response of that room as it was being destroyed, only the impulse this time is the blast of a rocket.

The recording of a folk song performed by Aynur Doğan underwent the convolution process along with this imaginary impulse response. Then, I repeated the process many times until I ended up with only a single resonating frequency. I didn’t play the folk song in a room; all work was done digitally. There was a narrative going on in my mind: it was as if the sorrowful inflections of this folk song were absorbed by the walls of the wrecked house, and the inflections were slowly being retrieved during the process.
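Editorial sketch: the repeated convolution described above can be illustrated in a few lines of Python. This is a minimal sketch under stated assumptions, not Kolat’s actual code or tools. The names `convolve`, `normalize`, and `convolution_generations`, and the toy `song` and `room` signals, are hypothetical stand-ins for the folk-song recording and the rocket-blast impulse response.

```python
import math


def convolve(signal, impulse_response):
    """Direct time-domain convolution of two lists of samples."""
    out = [0.0] * (len(signal) + len(impulse_response) - 1)
    for i, s in enumerate(signal):
        for j, h in enumerate(impulse_response):
            out[i + j] += s * h
    return out


def normalize(samples):
    """Scale so the loudest sample has magnitude 1; keeps levels stable across passes."""
    peak = max(abs(x) for x in samples)
    return [x / peak for x in samples] if peak > 0 else list(samples)


def convolution_generations(signal, impulse_response, passes):
    """Return [original, pass 1, pass 2, ...], each pass convolved once more."""
    generations = [list(signal)]
    for _ in range(passes):
        generations.append(normalize(convolve(generations[-1], impulse_response)))
    return generations


# Hypothetical toy data: a short click as the "song" and a decaying
# sine wave as the "impulse response" (one resonance, 8 samples per cycle).
song = [1.0] + [0.0] * 15
room = [math.exp(-n / 8) * math.sin(2 * math.pi * n / 8) for n in range(16)]

generations = convolution_generations(song, room, passes=4)

# Reading the interview as describing playback from most processed to
# least processed (an assumption), the listening order is the reverse.
listening_order = list(reversed(generations))
```

Each pass reinforces whatever frequencies the impulse response already favors, which is why many passes leave only a few resonances. With real audio, an FFT-based convolution would be the practical choice; the direct loop here is only meant to make the operation visible.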

Did you know the folk song growing up?

No. Growing up in the nineties, signs and items that belonged to the Kurdish identity were considered taboo; even the language was banned. If you were from that cultural sphere, you wouldn’t have any chance to express your cultural identity. I am not Kurdish but, growing up, the vibrations of the suppression and violence were around all of us. While my piece is directing attention to one act of violence, it wasn’t the only one.

You mentioned there were two musical processes going on in tandem. There is the one inspired by Lucier. The other has to do with live performance, that the performance, as you put it in an earlier conversation, “changes the sound that is performed in real time,” because the score is asking the musicians to be more improvisational.

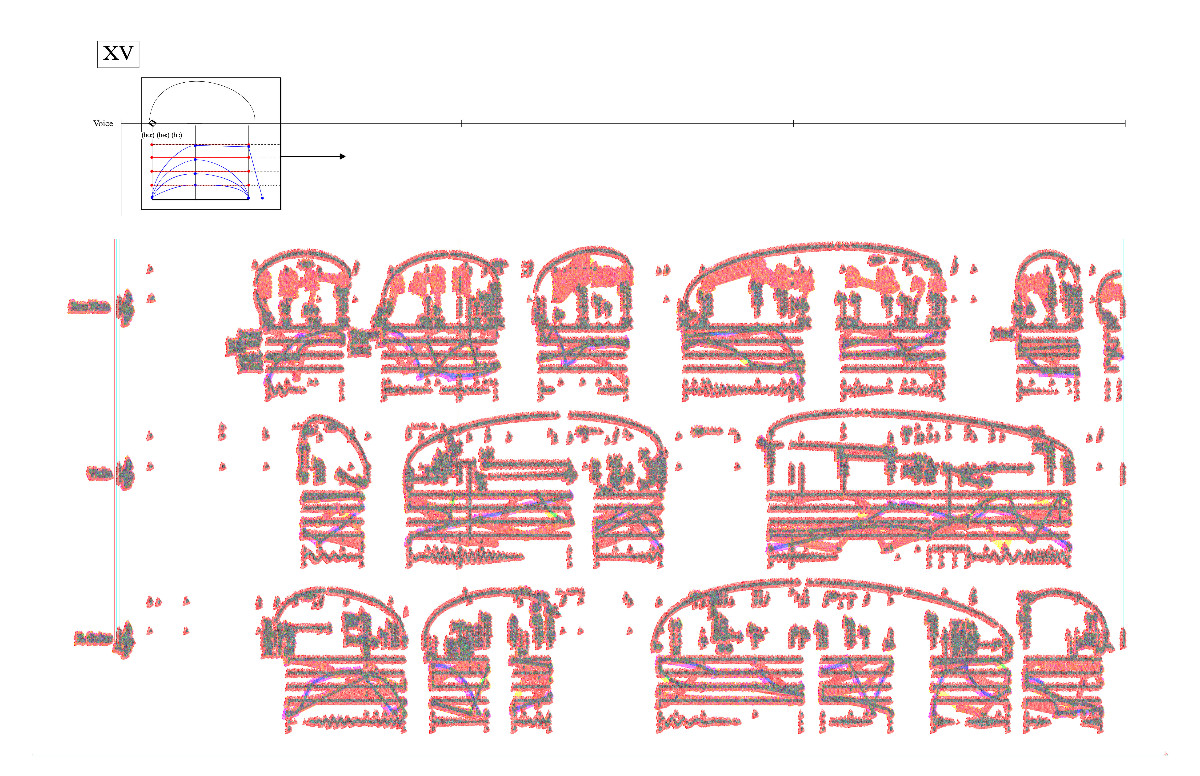

Exactly. The score is becoming more illegible.

The less legible it is, the more improvisation you want?

Yes. Initially, certain small elements become illegible. You can’t tell if the note is C natural or C sharp, but you can see C. Later, you can’t tell if the note is C or D, so you play something there. Then the score gets even more fuzzy, so the improvisation becomes freer and freer.

I find it interesting that, instead of giving instructions, you simply adjust levels of legibility. You continue to make marks, but they are marks that don’t mean anything . . . no, wait, is that right? The marks don’t mean anything?

The idea was to tamper with the brain-wiring of a musician who is trained in the Western tradition. I tried it with myself first. How would I play this? What does it mean to be illegible? How would I respond to that? I can’t read that, so I’m putting something there. What you can’t read changes, though; at first, there are small details that are illegible, but then the entire structure becomes unreadable.

I especially didn’t use any material that would stand out—such as a motif. The musicians did something very interesting, though. The musicians were improvising but, at some point, they started repeating certain gestures so, as you listen to the piece, your working memory starts connecting the gestures. That is exactly how a traditional composition framework works. The quintessential example is the so-called “fate motif” of Beethoven’s Fifth Symphony: Da-Da-Da-DUM. He develops the motif, he changes it, he transposes it; it’s omnipresent, and our working memory follows its reappearances. Well, during the performance, these guys did a similar thing. Eventually, the “composed” part sounded improvisational, while the improvised part sounded like a composition, in a more conventional sense, of course.

This shows that the dichotomy between composition and improvisation doesn’t really exist at the perceptual level. Moreover, gray areas do exist between the two. Someone might ask, why did you even write this notation; people could just play whatever they wanted, so why write notation? That question comes from the preconception that the relationship between composition and improvisation is binary. I am happy to see that this is not true, and many possibilities exist in the gray area between organized and spontaneous idioms.

You were trained as a classical pianist, and I’m wondering how your experience of performance has influenced your work as a composer of experimental music. Does the difference between performance and composition have to do, in part, with a different kind of attention to sound and, if so, how did your attention shift?

The pianist’s position is quite different than that of many other musicians. Most musicians have intimate relationships with their instruments. For one, most use the same instrument; the flutist takes her flute to the performances, whereas the pianist performs on a different instrument on each occasion. We don’t tune—I’m sure there are pianists who have the training to tune their instrument, but, commonly, we don’t touch anything other than the keys—whereas, for example, the clarinetist prepares her own reed. To use a software analogy, a pianist is like the end user.

That implies a “distance” which is also reflected in the human-instrument relationship. Think about the mechanism of the piano. A flute is based on a mechanism, too, but the action is more intimately connected with how sound is made. Or a violin: the fingers are directly touching the strings. The timbral palette these other instruments have—especially the string instruments—is incomparably richer than that of the piano. We don’t have much timbral control on the keyboard. Different timbres are possible only when you get into a different ergonomic situation like reaching to the strings, a totally different performance practice.

Being a pianist initially limited my mind in terms of how I perceived sound as an entity. Sound consisted of noteheads. Sound was two-dimensional, pitch and rhythm, conveniently mapped into subdivisions of beats and equal temperament. A different kind of attention to sound came much later. It required more intellectual clarity on how sound works, how resonance works, how we perceive sounds, how we make sound, mechanically or electronically. This clarity came much later, during my graduate studies.

When you went to the University of Washington, had you already made that move from performance to composition?

I was on my way. One thing I remember is that I was still looking at things from a rather striated point of view: I am going to use Technique 1 here, and Technique 2 there . . . What I do now is more about the gray areas between techniques, between parameters of musical performance. If three parameters—say, bow pressure, bow position, and finger pressure—appear in particular intensities, they create a particular sound, and there’s a name for that particular timbre. But what if only two of them get to that point and one is not there? Now you get a different thing which you can’t predict. A sense of fluidity stems from this. So, from the attention to how musicians play/sing, how they make sounds, came new perceptions which weren’t available to me earlier.

Halki/Heybeliada is a piece that really interests me because it involved the translation of a sound file into alphabetic data, and then editing that data (like one might a text) before re-installing it back into a sound file. Can you explain the thinking behind this piece?

Halki/Heybeliada is an homage to Edgar Manas, a Turkish-Armenian composer who is important to the history of contemporary music in Turkey; he was one of the pioneers. He was a very well-trained composer who, surprisingly enough, orchestrated Turkey’s national anthem. That Turkey has a national anthem orchestrated by a composer of Armenian heritage indicates how tightly knit these two cultures are . . .

I recorded myself playing Manas’s piece “Les îles des princes.” Then, using a program, I opened the recording as a stream of raw data, encoded in a stream of characters. Once I had the set of characters, I found all the o’s, all the y’s, all the s’s, and I removed them. The idea was to remove the letters that spelled the Turkish word for “genocide.”

I removed “genocide” from that set of information, then I put the data stream (minus the selected letters) back into the sound file, which introduced a distortion into the recording of Manas’s piece. Finally, I used that distorted recording as source material.

The theme of distortion ultimately refers to my own perception of early twentieth-century history as someone growing up in Turkey—a flawed, distorted perception from which “genocide” was removed.
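Editorial sketch: the letter-removal step can be approximated as deleting bytes from raw audio data. This is a minimal illustration, not a reconstruction of the program Kolat used. It assumes that each byte is read as one character, that only lowercase letters are removed, that the dotless ı is represented by the ASCII letter i (as in the letter list given in the introduction), and that the data is headerless. The names `LETTERS`, `strip_letters`, and `raw_audio` are hypothetical.

```python
# ASCII stand-ins for the letters listed in the article (s, o, y, k, i, r, m).
# Assumption: one byte is read as one character, lowercase only.
LETTERS = b"soykirm"


def strip_letters(raw: bytes, letters: bytes = LETTERS) -> bytes:
    """Return a copy of raw with every byte found in letters deleted."""
    return raw.translate(None, letters)


# Hypothetical stand-in for headerless audio data: every byte value once.
raw_audio = bytes(range(256))
edited = strip_letters(raw_audio)
removed = len(raw_audio) - len(edited)  # one byte lost per distinct letter
```

Because every deleted byte shifts all the data after it, samples stored in more than one byte fall out of alignment, which is one plausible source of the gaps and garbled moments in the resulting sound file.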

Tell us about your new piece Shōbute, premiering in Tokyo in October.

Shōbute is a piece for Noh voice and piccolo, commissioned by Ryoko Aoki. Trained in classical Noh theatre, Ryoko-san is a fascinating figure who often collaborates with different composers to create a contemporary repertoire that involves Noh voice.

The piece is based on the game of go. I am interested in what’s going on in artificial intelligence, and AlphaGo beat a world champion last year, a monumental achievement because go is an extremely complex game. The possible number of go games on a 19x19 board is arguably more than the number of atoms in the universe.

Shōbute is a go term. It is a move you make, a very risky move, when you are falling behind. It means something for master players; I’d think they would respond to the move emotionally, but the machine would not have that kind of response. So shōbute represents an unconquered aspect of go for AlphaGo: even though it beat a nine-dan master, it didn’t do it through human experience, through getting stressed about a risky move. Shōbute is a symbol of human experience.

There are two layers in the composition: the AI layer, and the human layer. The AI layer uses data taken from a game log for the first Google DeepMind Challenge Match game. I mapped the moves to musical parameters, and I applied this data directly to musical notation using notation software I wrote for this project.

The human layer appears on top of the former layer: it spontaneously alters it, amplifies it, and is affected by it. The dramatic structure (and the text) are also shaped by some heated commentaries on the go game by two go masters. Those commentaries are very interesting. The masters might say, “A big battle is about to begin!” [Laughs] The go masters were so intensely reading the game. As these commentaries described the game, they were automatically drawing a dramatic curve for me. Of course, I didn’t follow the commentaries linearly, from beginning to end. I selected; I cut.

Let me make sure I understand. The title of your work Shōbute refers to a last-ditch go move, one that risks all. This is an all-or-nothing move that a go player makes in desperation, when he has nothing left to lose. It is most likely an emotional moment for the player. For AlphaGo, however, shōbute is nothing more than a calculation. In your piece, you are playing with the contrast between human involvement in a game and computer calculation. Do I have this right? Can you expand on your interest in machinic vs. human decision-making, and how this contrast plays itself out in your composing process?

Players tend to use the term when they themselves are not sure if their move is a good strategy or not. You’re putting a lot on the line. If you know your move is good, you say, “Good move.” If you did a bad move, you say, “Bad move.” But the shōbute move is fuzzy. While it wouldn’t be fair to lean on an old, stupidly linear robot vs. complex human mind analogy, considering the current research on AI, it should still be safe to say that shōbute doesn’t exist in current “machine language” due to its emotional content.

AlphaGo would never make a shōbute move?

Maybe it would. For a human to make it, though, brings a sense of uncertainty. Maybe the next moves won’t be that good, or maybe the player will be better for having taken the risk . . . Considering this, I guess the overall theme for this project is certainty versus uncertainty.

Does uncertainty exist for AlphaGo? I am not sure, but AlphaGo was definitely unpredictable at some level. After the games, those involved in the programming of AlphaGo would comment, “We don’t have any idea why AlphaGo made this move, no idea.” So, the process of programming AlphaGo didn’t simply involve a conditional framework like, if this happens, do this. This would be impossible to program because the game is so complex.

Uncertainty plays an important role in my piece. The performance parameters in the music, even the ones that are simply extracted from data, are bound to create unpredictable results because they will be “translated” by the musicians. I don’t know how they will respond during the performance; there are so many different parameters that could differ from one performance to another.

Shōbute works with not only the ancient board game of go but also with the Noh vocal tradition. Could you tell us more about your inspiration for this work? Are you inspired by Japanese traditions?

This is my third time writing a piece inspired by Japanese culture, but this kind of inspiration carries certain risks. It is very easy to slip into the trap of cultural appropriation, ending up with yet another work that unsuccessfully attempts to simulate an ancient aesthetic. The aesthetics of Noh and tea ceremony definitely inspire the piece; however, I have to be very careful about how that inspiration is reflected in the work.

This project is especially risky because it’s for Noh voice—by using that characteristic timbre, I’m automatically within a certain part of the Japanese cultural sphere. I started thinking, how can I escape from this vortex? How can I find another medium that is still a part of the Japanese culture but doesn’t necessarily put me in a situation where I am trying to simulate the aesthetic of Noh as a foreigner?

Working with the game of go came out of that questioning. The game of go doesn’t have anything to do, as far as I know, with Noh. I needed that difference. It’s made me work better.

I have seen the appropriation taking place in my native cultural territory, too. I have witnessed some American artists taking on Rumi’s poetry, for example, and to my horror I’ve seen that some of these approaches were using a certain New-Agey interpretation of Rumi’s poetry that cuts out every allusion to Islam or to the cultural sphere in which he lived. I have seen many good intellectuals fall into that trap. It is very easy to fall into that trap.

So composing this piece for Noh voice and piccolo is your shōbute move?

[Laughs] Yes, indeed.

My understanding of experimental music (and my knowledge is limited) is that experimental music privileges conceptual processes—intellectual play and the testing of limits—over an aesthetic experience. Experimental music is playful and not particularly emotive.

Your works, too, are incredibly inventive in terms of their complex engagement with multiple processes, but works such as Messenger of Sorrows and Echoes of Tinder are also moving critiques of historical memory and collective trauma.

Can you speak to the relationship between experimentation and political engagement in your work?

Thank you for thinking these two elements exist in what I do. But politically engaged artworks take risks, too.

Let’s start with your description of experimental music: it is indeed a problematic term. Yes, often playfulness comes to the surface, and indeed emotiveness is either hidden or doesn’t exist. Let’s say, you have a new tool, or just a way to play an instrument, and you experiment. At some point, our attention goes from how do you do this, how did you get this sound or that result, to what do you do with this? I believe emotiveness is related to that second question. I would even use the term “expression.”

As far as political engagement in my works is concerned, there is always a possibility that I could be accused of tackling a given issue to guarantee an emotive response or, even worse, publicity. How could you prove otherwise?

To keep myself honest, I try to find ways to embed attributes of a topic within the structure of the music. Even if you don’t know what the piece is about, the topic exists within the structural elements; it is there, but I am not necessarily asking you to be aware of any narrative.

On the other hand, as a person who grew up in Turkey, being apolitical was not a choice, neither for me nor for my friends. That doesn’t mean that everyone was deeply knowledgeable about political history or theory; it was just something that you could not ignore. Now I see that the climate is changing here [in the US], after Trump’s election. Now, many creative people I know who have never considered tackling political themes are feeling a need to respond. And that’s about it: I have to say something; I don’t care how many people will hear it, or how they will react to it, I just have to put it out there.

At Millay, I was fascinated by your discussion of your composing process because it seemed like your use of data and algorithms was a form of translation play. Now, after talking to you further, I confess I may have confused your use of various digital tools with composing.

Let me try this again: you work with varied source materials (folk song, games, painting, historical trauma) and your tools are equally varied, from convolution techniques to algorithmic processes. Your source materials are reworked by your given tools and this then results in a territory (your word) or arena (my word) in which you then assemble your musical “object.”

Am I close? Can you say more about the relationship between source material and tool? The relationship between “territory” and “composition”?

I like your use of the term “arena.” It is perfect. It is closer to how I feel about territory because it implies a stage for performance. Basically, any of the tools mentioned are just tools to set up that stage on which some expressive thing goes on. The nature of the stage depends on the project, of course.

In my experience, I’ve seen examples where only the stage is set. The stage may be set in a very masterful manner but, still, this is only the setup. During the event, or during the experience, I’m looking at this wonderfully constructed stage, but nothing is actually going on. So, something expressive, something communicative, needs to happen beyond that territory, at a different plane of existence almost.

Again, the basic question is: okay I did this, this is ready, these tools, these ideas, but what am I going to do with them? That “what” I take as the subject of composition.

For any artist, the way you create your tools may be quite impressive, quite dramatic; it may even involve robotics—you set up machines doing things on the stage—but, again, at the end, it is all about what they are doing, what expression are we receiving from them. That’s the subject of composition for me.

You mention translation. After I read about your work, I thought of the Turkish poet Can Yücel. (It is difficult to explain without sharing original texts.) He translated some of Shakespeare’s sonnets using idioms; for example, using a decidedly colloquial Turkish. Shakespeare’s sonnets are transformed into something completely different, but you can feel Shakespeare; it is as if a reincarnation of Shakespeare appeared in Anatolia and talked about the same things. But Yücel’s works are not translations; there is almost no direct correlation. That’s like the stage and the performance for me. The stage is the linear, word-by-word meaning of the sonnet. The sonnet itself is the stage but there’s an extra thing, an extra expressive thing that differs from more conventional translations of Shakespeare which makes the particular work a thing in itself.

Your works actively engage with, and generate, varied representations and experiences of space, such as the destruction of a house in Messenger of Sorrows, the deliberate torching of a hotel in Echoes of Tinder, and perhaps also the gridded space in the board game of go. Can you talk about your explorations of different spatial concepts—acoustic space, symbolic space, performance space? For example, how do you work with the difference between representing space and creating space, or the difference between the space in the music and the listener’s space?

Acoustic space is perhaps the fourth dimension of music, if we consider pitch, rhythm, and timbre as the first three dimensions. However, my engagement with acoustic space itself is currently limited to the theoretical realm for practical reasons. Nevertheless, there are a few projects coming up that deal with acoustic space. In March 2018, organist Lola Wolf will perform a piece of mine, Quia Ergo Femina, an installation/concert piece for organ and electronics in St. Benedict’s Monastery in St. Joseph, Minnesota, which will heavily depend on the live processing of the acoustic characteristics of the monastery, using the techniques we discussed earlier. For this piece, I also wrote code that simulates the sound production in an organ flue pipe. Tweaking the parameters of this code allows me to create an “impossible pipe,” effectively creating a new space from a simulated, digitally represented space.

The second project deals with space delineated by wireless technology. I’m putting several wireless speakers around and sending a sound to them via Bluetooth, but I put them on the boundary of the range of the signal, getting a considerable distortion. I’m wondering: what is special about this distortion, musically speaking? It’s interesting how we perceive space as created by wireless technology. We felt it pretty graphically at Millay, didn’t we? So, that would be an example.

With Messenger of Sorrows, you are trying to represent a space that is being destroyed, but then there’s the space of the listener, when you are experiencing the sound, and then there’s your attempt to graphically represent the sounds without conventional notation.

I often experiment with different notational possibilities because conventional notation falls short in the representation of certain fluid processes in music. I’ll give you one example. In conventional notation, you could use the piano pedal in three ways: the pedal is up, you have pedal down, or you can get different effects if you have pedal in the middle, so you can notate these changed situations. In the piano piece Halki, I used a set of lines that goes everywhere between these three points. Now, practically, this doesn’t make sense because, well, the performer can totally move like that but the sound doesn’t change much; it doesn’t really matter if it’s higher than the middle, or if it’s lower than the middle.

The point is not to change sound here, but to attract the performer’s attention to the tiny movements she is making. If it were just one line going down, she would perceive it as a holistic thing, as a pattern going down; but when it is going down and there are these little movements in it, her brain pays attention to them. This brings her perceptual focus to the now, without letting the brain automatically set up the patterns and processes. This evokes a state of being-in-the-here-and-now (and a serious frustration with the composer).

Alvin Lucier’s I Am Sitting in a Room influenced your conception of Messenger of Sorrows. Can you say more about how this piece, or Alvin Lucier’s work in general, has been important to your thinking about, and experimenting with, sound and space?

Lucier’s artistic attitude is a source of inspiration in general because he managed to combine playfulness and expressiveness while challenging perceptions that are taken for granted. He mentions thinking of sounds as wavelengths instead of as high or low musical notes, for example. One can only imagine what is possible with this kind of consideration.

Regarding I Am Sitting in a Room . . . Reversing its process of decay in Messenger of Sorrows added an element of curiosity to the performance. I talked to several audience members after the performance, and they said slowly unveiling the source material created a sense of curiosity, a desire to know how the transformation will resolve.

Meanwhile, I had an interesting experience in the middle of that process. I could feel the existence of the folk song that would later be revealed, but I knew it was not actually there . . . yet. I wondered, what can I do with this experience? Imagine a song with which you are very familiar, and you apply the same process as in Messenger of Sorrows, effectively blurring the song. Will it bring a certain Proustian moment? Your memories come back, but you don’t know why . . . a sonic madeleine? Can we tap into the subconscious space of the audience this way? I will explore this in the future. So, yes, I can definitely say that Lucier’s work continues to inspire my work.